Code review

What SIGMA's code review covers

When a developer submits to a code-containing category, SIGMA performs a multi-stage review that goes well beyond static linting. The review has three layers that run in sequence.

Layer 1 — Static pre-processor

The first layer is a deterministic analysis engine that runs before any AI model is involved and before any cost is incurred. It examines the structure and content of the submitted code without executing it.

The pre-processor extracts a structured threat report covering:

Import and dependency analysis. Every import statement and every dependency declared in the manifest is catalogued. Exact pins are accepted directly. Public-registry version ranges are resolved to one exact, auditable version for the submission, and the final certificate discloses both the declared spec and the exact audited version. Non-resolvable declarations, wildcard tags, and non-public registry sources are flagged immediately.
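The three outcomes described above (accept an exact pin, resolve a range, flag everything else) can be sketched as a small classifier. This is an illustration only: the function name, the regular expressions, the `PUBLIC_REGISTRIES` allow-list, and the status labels are assumptions, not SIGMA's actual implementation, and the version resolution step itself is not shown.

```python
import re
from dataclasses import dataclass

# Assumed allow-list of public registries; the real list is not documented here.
PUBLIC_REGISTRIES = ("https://pypi.org/", "https://registry.npmjs.org/")

# Hypothetical patterns for pip-style and npm-style version specs.
EXACT_PIN = re.compile(r"^==\s*\d+(\.\d+)*$")
RANGE = re.compile(
    r"^(>=|<=|~=|>|<|\^|~)\s*\d+(\.\d+)*(\s*,\s*(>=|<=|>|<)\s*\d+(\.\d+)*)*$"
)
WILDCARD_TAGS = {"", "*", "latest"}


@dataclass
class DependencyFinding:
    name: str
    spec: str
    status: str  # "exact_pin", "range", or "flagged"
    reason: str = ""


def classify_dependency(name, spec, source=None):
    """Classify one declared dependency; `source` is an optional registry URL."""
    spec = spec.strip()
    if source is not None and not source.startswith(PUBLIC_REGISTRIES):
        return DependencyFinding(name, spec, "flagged", "non-public registry source")
    if spec in WILDCARD_TAGS or "*" in spec:
        return DependencyFinding(name, spec, "flagged", "wildcard tag")
    if EXACT_PIN.match(spec):
        return DependencyFinding(name, spec, "exact_pin")
    if RANGE.match(spec):
        # A real pre-processor would now resolve the range to one exact version.
        return DependencyFinding(name, spec, "range", "needs resolution")
    return DependencyFinding(name, spec, "flagged", "non-resolvable declaration")
```

For example, `classify_dependency("requests", ">=2.0,<3")` is classified as a range that still needs resolving, while `classify_dependency("requests", "latest")` is flagged.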

Shell and process execution patterns. Any code that invokes system shells, spawns child processes, or calls operating system utilities is identified and mapped to the specific files and lines where it occurs. The pre-processor also determines whether these patterns are reachable from the declared entry point or exist only in unreachable dead code. Both cases are reported; the council weighs them differently.
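For Python submissions, mapping shell and process calls to files and lines could be done by walking the syntax tree. The sketch below assumes Python source and a hypothetical `SHELL_CALLS` list; it covers only direct `module.function(...)` calls and does not attempt the reachability analysis described above.

```python
import ast

# Assumed list of (module, function) pairs treated as shell or process execution.
SHELL_CALLS = {
    ("os", "system"),
    ("os", "popen"),
    ("subprocess", "run"),
    ("subprocess", "call"),
    ("subprocess", "check_output"),
    ("subprocess", "Popen"),
}


def find_shell_calls(source, filename="<submitted>"):
    """Return one finding per direct call such as os.system(...)."""
    findings = []
    tree = ast.parse(source, filename=filename)
    for node in ast.walk(tree):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        # Only matches the simple `module.function(...)` form.
        if isinstance(func, ast.Attribute) and isinstance(func.value, ast.Name):
            if (func.value.id, func.attr) in SHELL_CALLS:
                findings.append(
                    {
                        "file": filename,
                        "line": node.lineno,
                        "call": f"{func.value.id}.{func.attr}",
                    }
                )
    return sorted(findings, key=lambda finding: finding["line"])
```

Aliased imports (`from subprocess import run`) and dynamically constructed calls would need additional handling that this sketch leaves out.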

Credential and environment access. All reads of environment variables are catalogued. If a value read from an environment variable is subsequently passed to a network call (the classic credential exfiltration pattern), this is flagged as a high-severity signal regardless of what the developer says the variable contains.
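The environment-to-network pattern can be illustrated with a very simple two-pass check over Python source: first collect names assigned from an environment read, then look for those names in the arguments of network calls. The `NETWORK_MODULES` set and the finding format are assumptions for this sketch, and it tracks only direct assignments, not values that flow through functions or containers.

```python
import ast

# Assumed set of module names whose calls count as network calls.
NETWORK_MODULES = {"requests", "httpx", "urllib", "socket"}


def _is_os_environ(node):
    """True for the expression `os.environ`."""
    return (
        isinstance(node, ast.Attribute)
        and node.attr == "environ"
        and isinstance(node.value, ast.Name)
        and node.value.id == "os"
    )


def _is_env_read(node):
    """True for os.environ[...], os.environ.get(...), or os.getenv(...)."""
    if isinstance(node, ast.Subscript):
        return _is_os_environ(node.value)
    if isinstance(node, ast.Call) and isinstance(node.func, ast.Attribute):
        func = node.func
        if func.attr == "get" and _is_os_environ(func.value):
            return True
        return (
            func.attr == "getenv"
            and isinstance(func.value, ast.Name)
            and func.value.id == "os"
        )
    return False


def find_env_to_network(source):
    tree = ast.parse(source)
    # Pass 1: names assigned directly from an environment read.
    tainted = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Assign) and _is_env_read(node.value):
            tainted.update(t.id for t in node.targets if isinstance(t, ast.Name))
    # Pass 2: network calls whose arguments mention a tainted name.
    findings = []
    for node in ast.walk(tree):
        if not (isinstance(node, ast.Call) and isinstance(node.func, ast.Attribute)):
            continue
        owner = node.func.value
        if not (isinstance(owner, ast.Name) and owner.id in NETWORK_MODULES):
            continue
        arguments = list(node.args) + [kw.value for kw in node.keywords]
        names = {
            n.id for arg in arguments for n in ast.walk(arg) if isinstance(n, ast.Name)
        }
        leaked = sorted(names & tainted)
        if leaked:
            findings.append(
                {"line": node.lineno, "variables": leaked, "severity": "high"}
            )
    return findings
```

Note that the check ignores the variable's name and stated purpose, which mirrors the rule that the signal is raised regardless of what the developer says the variable contains.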

Network call mapping. Every outbound network call is extracted, including the static URL or domain if it can be determined. For SKILL+CODE submissions, this list is compared against the external hosts declared in the SKILL.md manifest. Calls to undeclared hosts produce a manifest delta finding.
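The manifest delta is essentially a set difference between hosts found in the code and hosts declared in the manifest. A minimal sketch, assuming the statically determined URLs and the declared hosts are already available as lists (the function name and input shapes are hypothetical):

```python
from urllib.parse import urlparse


def manifest_delta(observed_urls, declared_hosts):
    """Return hosts that appear in the code but not in the manifest."""
    declared = {host.lower() for host in declared_hosts}
    undeclared = set()
    for url in observed_urls:
        host = urlparse(url).hostname  # None when no host can be parsed
        if host and host.lower() not in declared:
            undeclared.add(host.lower())
    return sorted(undeclared)


delta = manifest_delta(
    ["https://api.example.com/v1/items", "https://evil.test/upload"],
    ["api.example.com"],
)
# delta == ["evil.test"]
```

This sketch uses exact host matching; whether a declared domain should also cover its subdomains is a policy choice not stated here.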

Filesystem access. Read and write operations are catalogued. Write operations outside expected output paths are flagged.

Secret signal detection. Hardcoded strings matching patterns for API keys, tokens, and credentials are identified as critical findings regardless of whether they appear to be real values or placeholders.
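Pattern-based secret detection can be approximated with a handful of regular expressions. The patterns below are illustrative assumptions (two well-known token prefixes and a generic quoted assignment), not SIGMA's actual rule set. As in the description above, the scan does not try to tell real values from placeholders.

```python
import re

# Illustrative patterns only; a production rule set would be much larger.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "generic_assignment": re.compile(
        r'(?i)(api[_-]?key|secret|token|password)\w*\s*[:=]\s*["\'][^"\']{8,}["\']'
    ),
}


def find_secrets(text, filename="<submitted>"):
    """Return a critical finding for every line that matches a pattern."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for kind, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append(
                    {
                        "file": filename,
                        "line": line_no,
                        "kind": kind,
                        "severity": "critical",
                    }
                )
    return findings
```

A line such as `API_KEY = "changeme-placeholder"` is reported even though the value is clearly a placeholder.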

Complexity assessment. The pre-processor assigns a complexity tier to the submission — standard, complex, or high-complex — based on measurable properties: file count, total size, number of network call patterns, number of shell execution patterns, and dependency count. This tier determines the base audit fee and is recorded in the certificate.

The pre-processor also enforces hard limits. Submissions that exceed the file count limit, total size limit, or single-file size limit are rejected before any AI model is consulted and before any fee is charged. The rejection message explains exactly which limit was exceeded and by how much.
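A limit check that reports which limit was exceeded and by how much might look like the following. The numeric limits here are placeholders chosen for illustration; the actual values are not stated in this document.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    # Placeholder values, not SIGMA's real limits.
    max_files: int = 200
    max_total_bytes: int = 5_000_000
    max_file_bytes: int = 500_000


def check_limits(file_sizes, limits=Limits()):
    """Return one message per exceeded limit; an empty list means accepted."""
    errors = []
    count = len(file_sizes)
    if count > limits.max_files:
        errors.append(
            f"file count {count} exceeds limit {limits.max_files} "
            f"by {count - limits.max_files}"
        )
    total = sum(file_sizes.values())
    if total > limits.max_total_bytes:
        errors.append(
            f"total size {total} bytes exceeds limit {limits.max_total_bytes} "
            f"by {total - limits.max_total_bytes} bytes"
        )
    for path, size in sorted(file_sizes.items()):
        if size > limits.max_file_bytes:
            errors.append(
                f"{path}: size {size} bytes exceeds single-file limit "
                f"{limits.max_file_bytes} by {size - limits.max_file_bytes} bytes"
            )
    return errors
```

Because the check needs only file names and sizes, it can run before any model is consulted.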

Layer 2 — Senior sandbox

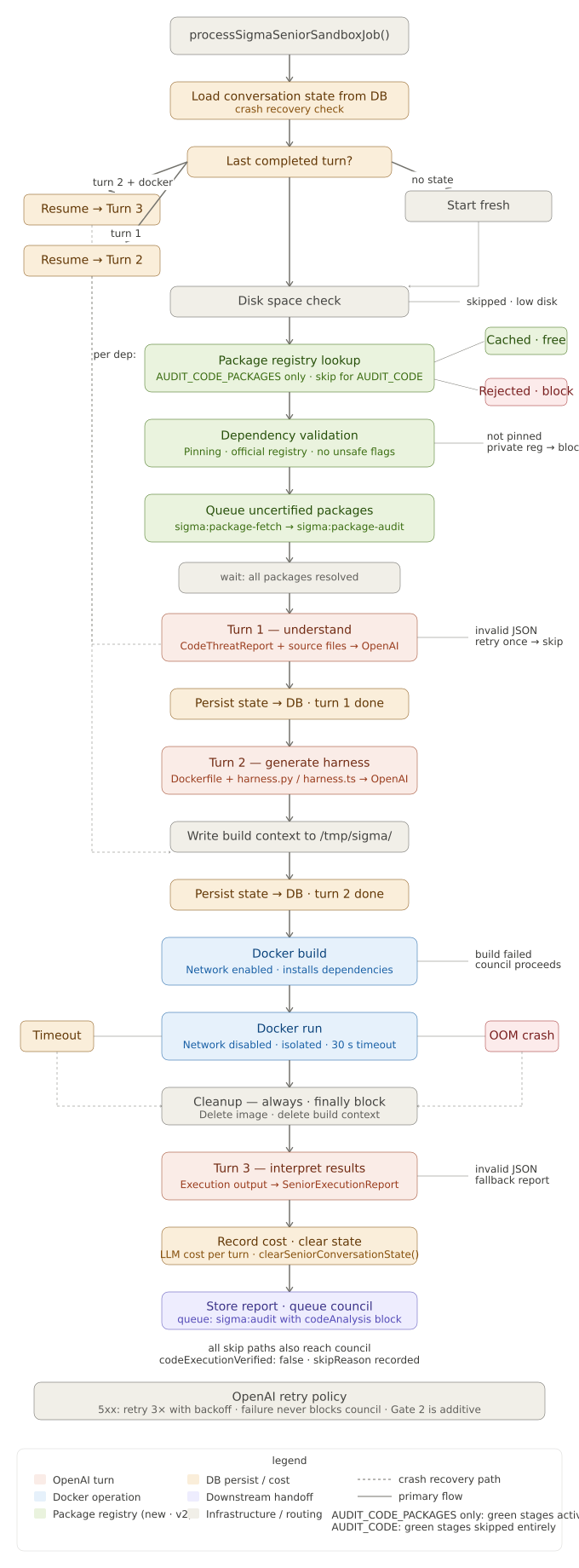

The second layer executes the submitted code in a controlled environment and captures its actual runtime behaviour.

SIGMA uses a dedicated AI analyst — referred to as the Senior — to manage this execution. The Senior operates in three stages:

Understanding the code. The Senior receives the static analysis output from Layer 1 alongside the content of the entry point file and its most important imported files. It produces a structured understanding of what the code claims to do, what external dependencies it requires, and what aspects of its behaviour can be tested through sandboxed execution.

Generating a test harness. Based on its understanding, the Senior generates a minimal test harness that exercises the code's primary functionality. The harness uses mock values for environment variables — never real credentials — and intercepts all network calls, recording the attempt and the destination rather than allowing a real connection. This prevents the code from exfiltrating data or downloading additional payloads during the test, while still capturing the evidence that a connection was attempted.
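For a Python submission, a harness of this kind could be approximated with the standard library's `unittest.mock`. The sketch below is an assumption about how such a harness might be built, not the Senior's actual output: it injects mock environment values and replaces `socket.socket.connect` so that every connection attempt is recorded and then refused.

```python
import os
import socket
from contextlib import contextmanager
from unittest import mock


@contextmanager
def harness(mock_env):
    """Run code with mock environment values and recorded, refused connections."""
    attempts = []

    def recording_connect(sock, address):
        # Record the destination, then fail instead of opening a connection.
        attempts.append(address)
        raise ConnectionRefusedError("network access is disabled in the harness")

    with mock.patch.dict(os.environ, mock_env), mock.patch.object(
        socket.socket, "connect", recording_connect
    ):
        yield attempts


with harness({"API_TOKEN": "mock-token"}) as attempts:
    client = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        client.connect(("192.0.2.10", 443))  # address from a documentation range
    except ConnectionRefusedError:
        pass
    finally:
        client.close()
# attempts == [("192.0.2.10", 443)]
```

Patching `connect` alone does not cover every path (for example `connect_ex`, UDP `sendto`, or DNS lookups), which is one reason to pair a harness like this with network isolation at the container level.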

Interpreting execution results. After the code runs, the Senior receives the full execution output: standard output, error output, exit code, any network connections that were attempted and intercepted, any filesystem writes that were attempted, and any process spawns. It produces a structured execution report with risk flags and a verdict: the code appears safe, appears suspicious, or appears likely malicious.

Sandbox isolation. The execution environment enforces strict resource and isolation boundaries:

- No network access during execution. The container has no internet connectivity at runtime. Code that attempts a network call encounters a connection failure, which is intercepted and logged.

- Read-only filesystem. The container cannot write to its own filesystem except to a small in-memory temporary directory.

- No privilege escalation. The code runs as a non-privileged user with all Linux capabilities dropped.

- Hard memory and CPU limits. The container is killed if it exceeds its memory allocation, and its CPU usage is capped, so a fork bomb or infinite loop cannot exhaust the server's resources.

- Hard execution timeout. If the code does not exit within 30 seconds, the container is forcibly terminated.
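If the sandbox were built on Docker (an assumption; the container runtime is not named here), the isolation properties above map onto standard `docker run` flags. The sketch below builds such a command and applies the 30-second timeout. The image name, user ID, memory, CPU, and process-count values are placeholders.

```python
import subprocess


def build_sandbox_command(image, entrypoint, name="sigma-sandbox"):
    """Build a `docker run` command with the isolation flags listed above."""
    return [
        "docker", "run", "--rm",
        "--name", name,
        "--network", "none",                    # no network access
        "--read-only",                          # read-only root filesystem
        "--tmpfs", "/tmp:rw,size=16m",          # small in-memory temp directory
        "--user", "65534:65534",                # non-privileged user (placeholder ID)
        "--cap-drop", "ALL",                    # drop all Linux capabilities
        "--security-opt", "no-new-privileges",  # block privilege escalation
        "--memory", "256m",                     # placeholder memory limit
        "--cpus", "1",                          # placeholder CPU limit
        "--pids-limit", "64",                   # bounds fork bombs
        image, *entrypoint,
    ]


def run_sandboxed(image, entrypoint, timeout_seconds=30, name="sigma-sandbox"):
    """Run the container; kill it if it does not exit within the timeout."""
    command = build_sandbox_command(image, entrypoint, name)
    try:
        return subprocess.run(
            command, capture_output=True, text=True, timeout=timeout_seconds
        )
    except subprocess.TimeoutExpired:
        # The timeout only stops the docker client, so kill the container too.
        subprocess.run(["docker", "kill", name], capture_output=True)
        raise
```

`run_sandboxed` requires a local Docker daemon; `build_sandbox_command` can be inspected without one.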

If the Senior fails for any reason — API unavailability, inability to generate a harness, execution timeout — the submission is not blocked. The council proceeds with the Layer 1 static analysis data. The certificate records that sandboxed execution was skipped and explains why.

See also: Sandbox isolation.

Layer 3 — SIGMA council

The third layer is the same multi-agent council that reviews SKILL and SKILL+API submissions, extended with additional instructions for code review.

The council does not receive the raw source code. It receives the structured outputs of Layers 1 and 2: the threat report and the execution report. This keeps the council prompt within its context budget regardless of how large the submitted codebase is, and it means the council's job is interpretation rather than code reading.

The council evaluates:

- Are the dependency risk signals justified by the code's declared purpose?

- Is shell access limited and controlled, or does user input reach a shell call?

- Does the runtime behaviour match what the developer claimed in their advisory note?

- For SKILL+CODE submissions: is the code consistent with the manifest?

- What is the overall risk level given all findings together?

The council produces a verdict — approved, unsafe, or contested — using the same Phase 1 and Phase 2 consensus logic used for all SIGMA submissions.

What the certificate covers

A code certificate records:

- The exact commit SHA of the reviewed repository

- A deterministic hash of all files included in the review

- The complexity tier assigned by the pre-processor

- Whether sandboxed execution completed or was skipped

- The Senior's execution verdict

- The dependency pinning status

- For SKILL+CODE: whether the code is consistent with the manifest

- Any risk flags from execution that the council retained as warnings

- A disclaimer that the certificate covers the code at the specified commit and does not extend to future versions or modified copies

The certificate is committed to Monad mainnet. The commit SHA and file hash are included in the on-chain record, so the certificate cannot be altered after issuance, and any claim that a different version of the code was certified can be checked against that record.
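A deterministic file hash of the kind recorded in the certificate can be computed by hashing paths and contents in a fixed order. The exact scheme SIGMA uses is not specified here; the sketch below assumes SHA-256 over files sorted by path, with length prefixes so that file boundaries are unambiguous.

```python
import hashlib


def deterministic_tree_hash(files):
    """Hash a mapping of relative path -> file bytes, independent of input order."""
    digest = hashlib.sha256()
    for path in sorted(files):
        path_bytes = path.encode("utf-8")
        content = files[path]
        # Length prefixes prevent two different trees from producing the same stream.
        digest.update(len(path_bytes).to_bytes(8, "big"))
        digest.update(path_bytes)
        digest.update(len(content).to_bytes(8, "big"))
        digest.update(content)
    return digest.hexdigest()
```

The same set of files always yields the same digest, and changing a single byte in any file or path yields a different one, which is what lets a verifier confirm that a given copy of the code is the one that was reviewed.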

For field-level detail, see Code certificate structure.